What is an Algorithm in Computer Programming: People often ask, "What is an algorithm in programming?" The simple answer is that, in computer programming, an algorithm is a set of instructions that a computer follows to carry out a given operation or solve a specific problem. Algorithms are essential building blocks of software, and they largely determine what an application can do and where its limits lie.

What is an Algorithm in General Terms?

In mathematics and computer science, an algorithm is an unambiguous specification of how to solve a class of problems. Algorithms can perform calculations, data processing, and automated reasoning tasks.

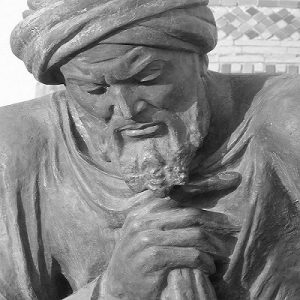

The word algorithm comes from the name of Muhammad ibn Musa al-Khwarizmi, a mathematician who wrote a treatise on arithmetic methods in the ninth century. Today, algorithms are used in a broad variety of applications, including logistics algorithms that improve delivery routes and social media algorithms that control which posts appear in your feed.

Define algorithm in computer programming: An algorithm is like a recipe that a computer follows to solve a problem. The recipe is a set of instructions that walks the computer through each step. The computer follows these instructions until it finds a solution or finishes the task.

Learn What is an Algorithm in Computer Programming

Algorithms are used by programmers to handle complicated issues in a methodical and effective manner. An algorithm can speed up the process of addressing a problem by dissecting it into more manageable, smaller parts.

Efficiency is among the most critical properties of an algorithm. An efficient algorithm completes a task as quickly as possible while using as few resources, such as memory, as possible. This matters because it lets programmers create software that solves problems quickly without wasting resources or effort.

To sum up, algorithms are crucial to computer programming. They are used to carry out tasks methodically and efficiently. By understanding algorithms, programmers can design software that is faster and uses fewer resources. Whether you are an experienced programmer or just beginning to learn about computer science, algorithms are an interesting and vital topic to study.

Why is it called an algorithm?

The word algorithm comes from the Persian mathematician Muhammad ibn Musa al-Khwarizmi, who lived in the ninth century. The Latinized form of his name, Algoritmi, was for centuries associated with the decimal number system and the rules for calculating with it. The term later came to mean any step-by-step procedure.

Who is the Father of the Algorithm?

Muhammad ibn Musa al-Khwarizmi

About Muhammad ibn Musa al-Khwarizmi

Muhammad ibn Musa al-Khwarizmi was a Persian mathematician, astronomer, geographer, and scholar in the House of Wisdom in Baghdad. He is famous for his pioneering work in algebra, which provided a systematic method for solving linear and quadratic equations. He also authored works on astronomy, geography, and trigonometry, which were instrumental in advancing science and mathematics during the Golden Age of Islam. His contributions to mathematics were so significant that the term "algorithm" is derived from his name.

How do Algorithms Work in Computer Programming?

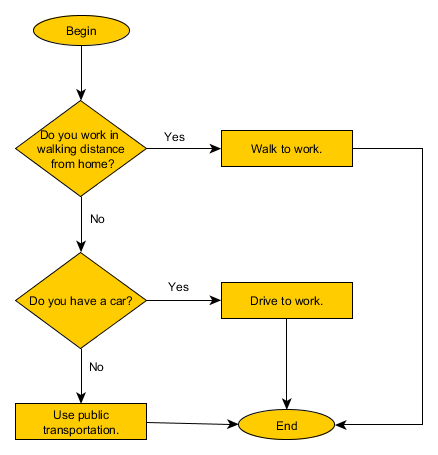

An algorithm in computer programming is a set of instructions that guides the computer to solve a specific problem or perform a certain task. It is a step-by-step procedure for carrying out that task.

Here are the basic steps that make an algorithm work:

Defining the problem: First, the programmer must identify the problem that needs to be solved. This may involve clarifying the task, outlining the requirements needed to complete the job, and identifying any constraints that might affect the solution.

Designing the algorithm: Next, the programmer designs a series of steps that will solve the problem. These steps may include mathematical calculations, logic structures, and decision-making processes.

Writing the algorithm: After designing the algorithm, the programmer writes it using a programming language such as Java, Python, or C++. This involves converting the designed steps into a sequence of instructions that can be executed by a computer.

Testing the algorithm: Once the algorithm has been written, it needs to be tested to ensure it generates the correct output for different inputs. This may involve running the algorithm with different data sets or test cases and examining the results.

Refining the algorithm: If issues are identified during testing, the programmer may need to refine or tweak the algorithm so that it can work effectively and efficiently. This sometimes requires re-writing the program.

Implementing the algorithm: Finally, if the algorithm is successful, it can be implemented into a software application, website, or program as part of the solution to the problem.

In conclusion, algorithms play an important role in computer programming as they help programmers solve complex problems, automate processes and perform tasks efficiently.
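The define, design, write, and test steps above can be sketched with a small example. This is only an illustrative sketch: the problem (finding the largest number in a list), the function name `find_largest`, and the test cases are assumptions chosen for the example, not taken from the article.

```python
# Problem (defined): return the largest value in a non-empty list of numbers.
# Design: remember the largest value seen so far and compare it with each item.

def find_largest(numbers):
    """Return the largest value in a non-empty list of numbers."""
    if not numbers:
        # Constraint identified while defining the problem: empty input is invalid.
        raise ValueError("numbers must not be empty")
    largest = numbers[0]
    for value in numbers[1:]:
        if value > largest:
            largest = value
    return largest


# Testing: run the algorithm on several inputs and compare with expected outputs.
test_cases = [
    ([3, 7, 2], 7),
    ([-5, -1, -9], -1),
    ([4], 4),
]
for numbers, expected in test_cases:
    assert find_largest(numbers) == expected
print("all test cases passed")
```

If one of the test cases failed, that would be the point to go back and refine the design before using the function in a larger program.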

What is an Algorithm in Computing, with an Example?

An algorithm is a step-by-step process for solving a problem or completing a task, typically expressed as a set of instructions designed to be carried out by a computer. Here is an example of an algorithm:

Problem: Find the sum of all even numbers between 1 and 100.

Algorithm:

- Initialize a variable called “sum” to 0.

- Start a loop that runs from 2 to 100 (incrementing by 2 each time).

- Within the loop, add each even number to the “sum” variable.

- When the loop finishes, output the final value of “sum”.

The resulting program steps through the even numbers from 2 to 100 and adds each one to the “sum” variable. Once the loop completes, the program outputs the final value of “sum”, which is the sum of all even numbers between 1 and 100.
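As a minimal sketch, the algorithm above could be written in Python as follows. The function name `sum_even_numbers` and the `limit` parameter are assumptions added for illustration, and the variable is called `total` because `sum` is the name of a Python built-in.

```python
def sum_even_numbers(limit=100):
    """Add up the even numbers from 2 to limit (inclusive)."""
    total = 0                              # initialize the running sum to 0
    for number in range(2, limit + 1, 2):  # 2, 4, 6, ..., limit
        total += number                    # add each even number to the sum
    return total


print(sum_even_numbers())  # prints 2550
```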

What are the Features of an Algorithm?

- Input: An algorithm takes zero or more well-defined inputs that it needs to produce the desired output.

- Output: An algorithm must produce at least one well-defined output that is clearly related to its inputs.

- Definiteness: An algorithm must be definite in nature, i.e., it must be unambiguous and clear.

- Finiteness: An algorithm must be finite in nature, i.e., it must terminate after a finite number of steps.

- Correctness: An algorithm must produce a correct output for all possible inputs.

- Efficiency: An algorithm must be efficient, i.e., it must require minimal time and resources to produce the desired output.

- Generality: An algorithm should solve every instance of the problem it addresses, not just one particular input.
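To make these features concrete, here is a sketch using Euclid's algorithm for the greatest common divisor. The choice of this algorithm and the function name `gcd` are assumptions made for illustration, and the sketch assumes non-negative integer inputs.

```python
def gcd(a, b):
    """Greatest common divisor of two non-negative integers (Euclid's algorithm)."""
    # Input: two numbers, a and b.
    # Definiteness: each step is an exact arithmetic operation.
    while b != 0:
        # Finiteness: the remainder a % b is always smaller than b,
        # so b shrinks on every pass and must eventually reach 0.
        a, b = b, a % b
    # Output: a single, well-defined result.
    return a


print(gcd(48, 18))  # prints 6
```

The same function works for any pair of non-negative integers, which is the generality property in practice.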

How do You Determine a Good Algorithm?

How do you identify a good algorithm? Here are some general characteristics that good algorithms typically share.

- Efficiency: A good algorithm should be efficient, meaning that it should take the least amount of time and resources possible to accomplish its task.

- Correctness: A good algorithm should produce the correct output for any given input.

- Clarity: A good algorithm should be easy to understand, read, and modify.

- Scalability: A good algorithm should perform well on large datasets and not break down under heavy workloads.

- Robustness: A good algorithm should be able to handle input errors or unexpected situations without crashing.

- Reusability: A good algorithm should be reusable, meaning that it can be used in multiple programs or applications.

- Maintainability: A good algorithm should be easy to maintain, meaning that it can be updated, improved, or repaired without too much effort or cost.

- Flexibility: A good algorithm should be flexible, meaning that it can be adapted to different use cases or requirements.
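Efficiency and scalability are easier to see with numbers. The sketch below compares two ways of finding a value in a sorted list, a linear search and a binary search, and counts how many comparisons each one makes. The function names and the comparison counters are assumptions added for illustration.

```python
def linear_search(items, target):
    """Check items one by one. Returns (index, comparisons); index is -1 if absent."""
    comparisons = 0
    for index, item in enumerate(items):
        comparisons += 1
        if item == target:
            return index, comparisons
    return -1, comparisons


def binary_search(sorted_items, target):
    """Halve the search range each step. Requires a sorted list."""
    comparisons = 0
    low, high = 0, len(sorted_items) - 1
    while low <= high:
        middle = (low + high) // 2
        comparisons += 1
        if sorted_items[middle] == target:
            return middle, comparisons
        if sorted_items[middle] < target:
            low = middle + 1    # target can only be in the upper half
        else:
            high = middle - 1   # target can only be in the lower half
    return -1, comparisons


data = list(range(1, 1001))       # the sorted numbers 1..1000
print(linear_search(data, 1000))  # prints (999, 1000)
print(binary_search(data, 1000))  # prints (999, 10)
```

Both functions are correct, but on this input the binary search needs 10 comparisons where the linear search needs 1000, and the gap widens as the list grows.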

What are the Types of Algorithms in Computers?

There are several types of algorithms in computer science, including:

- Brute Force Algorithms: These algorithms systematically try every possible candidate until they find a solution. They are often used for small problems with a limited number of candidates.

- Divide and Conquer Algorithms: These algorithms divide the problem into smaller subproblems, solve them recursively, and combine the results. Merge sort is a common example.

- Dynamic Programming Algorithms: These algorithms solve optimization problems by breaking them into smaller overlapping subproblems and storing each subproblem's result so that it is computed only once.

- Greedy Algorithms: These algorithms make the locally optimal choice at each stage with the hope of reaching a global optimum.

- Heuristic Algorithms: These algorithms use a rule-of-thumb approach to solve problems that are difficult or impossible to solve using conventional methods.

- Genetic Algorithms: These algorithms simulate the natural selection process to optimize a solution to a problem.

- Backtracking Algorithms: These algorithms build a solution step by step, abandoning a partial solution and undoing the last choice as soon as it cannot lead to a valid result.

- Randomized Algorithms: These algorithms make random choices as part of their logic. Their running time or output can vary from run to run, but they are designed to perform well on average or to be correct with high probability.
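The difference between two of these types can be sketched on one small problem: making a given amount with as few coins as possible. The problem, the coin values, and the function names below are assumptions chosen for illustration.

```python
def greedy_coin_count(coins, amount):
    """Greedy: always take as many of the largest remaining coin as fit."""
    count = 0
    for coin in sorted(coins, reverse=True):
        count += amount // coin
        amount %= coin
    return count if amount == 0 else None  # None: greedy could not reach the amount


def dp_coin_count(coins, amount):
    """Dynamic programming: reuse the best answer for every smaller amount."""
    unreachable = float("inf")
    fewest = [0] + [unreachable] * amount  # fewest[s] = fewest coins that sum to s
    for subtotal in range(1, amount + 1):
        for coin in coins:
            if coin <= subtotal:
                fewest[subtotal] = min(fewest[subtotal], fewest[subtotal - coin] + 1)
    return fewest[amount] if fewest[amount] != unreachable else None


print(greedy_coin_count([1, 3, 4], 6))  # prints 3 (4 + 1 + 1)
print(dp_coin_count([1, 3, 4], 6))      # prints 2 (3 + 3)
```

With these coin values the locally best choice (taking the 4 first) does not lead to the global optimum, which is why a greedy algorithm only "hopes" to reach it, while the dynamic programming version checks every subproblem.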

Algorithm to Find the Factorial of a Number – Example of an Algorithm

Find the factorial of a number, for example 5:

5! = 5 × 4 × 3 × 2 × 1 = 120 (by convention, 0! = 1)

1. Start
2. Read the number n
3. [Initialize] i = 1, fact = 1
4. Repeat steps 5 and 6 while i ≤ n
5. fact = fact × i
6. i = i + 1
7. Print fact
8. Stop
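The factorial steps can be sketched in Python as follows. The function name `factorial` and the check for negative input are assumptions added for illustration. Note that the loop continues while `i <= n`, so that n itself is included in the product.

```python
def factorial(n):
    """Return n! for a non-negative integer n."""
    if n < 0:
        raise ValueError("n must be non-negative")
    i = 1       # initialize the counter
    fact = 1    # initialize the running product (this also gives 0! = 1)
    while i <= n:
        fact = fact * i
        i = i + 1
    return fact


print(factorial(5))  # prints 120
```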

Finally, in this blog we have learned, step by step, what an algorithm is in computer programming.
